You Don't Need to Train a Model to Make It Smarter

AI capabilities have advanced at a startling pace over the past year. Normally, when we see a big benchmark jump, we assume the labs have made the models themselves smarter, but increasingly that’s not the case.

A striking result from last month comes from Gemini 3 Deep Think, which scores 84.6% on the ARC-AGI-2 reasoning benchmark, compared with 31.1% for Gemini 3 Pro. The remarkable thing to me is that both are built on the same pre-trained model. The main difference is what happens around the model call: how many paths it explores, and how it validates its exploration. This is the harness layer, and it is where a huge amount of capability gain is happening right now. (Deep Think does have some additional post-training compared to Gemini 3 Pro, but its purpose is to make the model more successful at using the harness; more on this later.)

Let’s break this down into a simple taxonomy. When you send a message to an AI, three layers of work determine the quality of what you get back, each of which happens at a different point in the model’s lifecycle.

Pre-training. The base LLM is trained on a vast corpus of text. Given a sequence of input text, the model predicts what comes next based on patterns in what it has seen before. Roughly speaking, LLMs “got good” around 2020 with the release of GPT-3. That’s when the world took notice, as models went from toy to useful.

Post-training. The pre-trained model is then refined through supervised learning and reinforcement learning for helpfulness, safety, and anything else where we can give it feedback at scale. The first widely known post-training technique was RLHF (reinforcement learning from human feedback), which was used to make the models friendly and helpful and became the foundation of ChatGPT’s assistant persona (late 2022). Post-training saw further gains as it moved from human feedback to AI-generated feedback. Reasoning-specific post-training, which uses verifiable rewards (“correct answers” for problems in domains where such a thing exists), started to show results in late 2024, allowing models like OpenAI o1 to make advances in maths and coding.
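To make “verifiable rewards” concrete: when a problem has a known correct answer, the reward can be computed by a program instead of a human rater. The sketch below is a minimal illustration, assuming the model has been asked to finish with a line of the form `Answer: ...` (a hypothetical convention, not any lab’s actual format). Production reward functions normalise answers far more carefully, and this is not a description of how o1 or any specific model was trained.

```python
import re
from typing import Optional

def extract_final_answer(completion: str) -> Optional[str]:
    """Return the text after the last 'Answer:' marker (hypothetical output convention)."""
    matches = re.findall(r"Answer:\s*(.+)", completion)
    return matches[-1].strip() if matches else None

def verifiable_reward(completion: str, ground_truth: str) -> float:
    """Binary reward: 1.0 if the extracted answer exactly matches the known answer, else 0.0.
    No human judgement is involved, so it can be computed for huge numbers of samples."""
    answer = extract_final_answer(completion)
    return 1.0 if answer == ground_truth.strip() else 0.0

print(verifiable_reward("2 + 2 makes four.\nAnswer: 4", "4"))  # 1.0
print(verifiable_reward("Probably five.\nAnswer: 5", "4"))     # 0.0
print(verifiable_reward("I'm not sure.", "4"))                 # 0.0
```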

Harness. This is what I’m calling everything that happens at call time, when we actually use the model. Back in the day (if I can refer to 2020 as such) people started exploring this with prompt engineering. The harness layer encompasses the context we feed the model, the structure that we encourage it to use for reasoning, and anything to do with orchestrating multiple LLM calls rather than hoping to get the answer out of a single one.

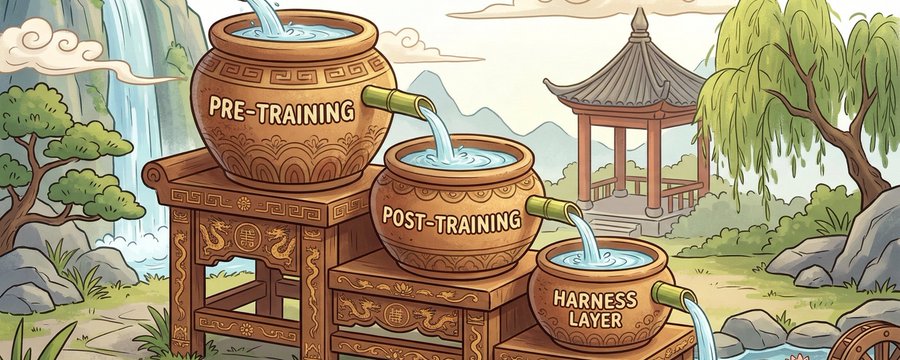

These three layers have each been on their own staggered S-curve of improvement. At first, all of the gains were in pre-training. Only once the pre-trained models were good enough did post-training start to be worth doing. Today, the harness layer is hitting the steepest part of its S-curve.

I think of it as a sequence of connected buckets, like an ancient Chinese water clock: each level has to fill up before the next one starts receiving water. The pre-training and post-training buckets have filled up enough that the harness-layer bucket is finally filling at real speed.

People have been working on “harness improvements” for as long as there have been LLMs. In the beginning there was prompt engineering. A couple of years later, in 2022, early harness techniques for reasoning included chain-of-thought prompting, chaining multiple LLM runs together, and the infamous zero-shot “let’s think step by step” prompt, which surprised many of us with how well it worked.

For a while, the models were not yet good enough for complex multi-call chaining to be consistently helpful: sometimes you just ended up compounding errors on top of each other. As of 2025, the models are finally reliable enough that this is no longer true, which is why we’re now seeing such strong results from techniques like Deep Think.
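A back-of-the-envelope calculation shows why per-call reliability matters so much for chaining. If each call in an n-step chain succeeds with probability p, and failures are independent (a simplifying assumption: real errors are often correlated, and verification steps can catch some of them), the chain as a whole succeeds with probability p^n.

```python
def chain_success_probability(per_step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent per-step failures."""
    return per_step_success ** steps

for p in (0.90, 0.99):
    print(f"per-step {p:.2f} -> 10-step chain {chain_success_probability(p, 10):.2f}")
# per-step 0.90 -> 10-step chain 0.35
# per-step 0.99 -> 10-step chain 0.90
```

Under these assumptions, going from 90% to 99% per-step reliability takes a ten-step chain from failing most of the time to succeeding most of the time.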

This matters a lot for us independent AI practitioners, because while pre-training and post-training are the exclusive domains of the major AI labs, we outsiders have a lot of agency over the harness layer. We engineer orchestration and reasoning solutions that change what information goes into each LLM call and how we chain LLM calls together. We may not get results as astonishing as the big labs do, because we don’t have access to harness-specific post-training, but a big slice of the pie is available nevertheless.

Here are three domains of harness-layer techniques that have been on my mind. Each is an opportunity for practitioners to get better results out of LLMs.

1. Context engineering

Single LLM calls are powerful, but the model can only work with what’s in its context, which is limited in length, and performance degrades as contexts get long. When we’re working with coding agents in big codebases, what gets loaded into the model’s context becomes critical to the agent’s success. We want the harness to include relevant context and exclude irrelevant context as much as possible.
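One simple way to act on “include relevant, exclude irrelevant” is to treat context as a budget. The sketch below greedily packs the highest-scoring snippets into a fixed token allowance, and drops anything under a relevance floor even when there is room for it. It assumes each snippet already has a relevance score and a token count; how you compute those (embeddings, keyword matching, file proximity) is left open, and the threshold and budget values are purely illustrative.

```python
from dataclasses import dataclass

@dataclass
class Snippet:
    text: str
    relevance: float  # assumed score in [0, 1], computed however your harness likes
    tokens: int       # assumed token count for this snippet

def select_context(snippets: list[Snippet], budget: int, min_relevance: float = 0.3) -> list[Snippet]:
    """Greedily pack the most relevant snippets into a token budget,
    skipping anything below the relevance floor even if it would fit."""
    chosen, used = [], 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        if s.relevance < min_relevance:
            break  # sorted descending, so everything after this is less relevant too
        if used + s.tokens <= budget:
            chosen.append(s)
            used += s.tokens
    return chosen

snippets = [
    Snippet("auth middleware source", 0.9, 800),
    Snippet("session docs", 0.7, 1500),
    Snippet("unrelated CSS file", 0.1, 200),
    Snippet("login route tests", 0.8, 600),
]
print([s.text for s in select_context(snippets, budget=2000)])
# ['auth middleware source', 'login route tests']
```

Greedy packing is not optimal in general (it is a knapsack problem), but it is predictable, which matters when you are debugging why an agent did or did not see something.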

One interesting piece of context engineering research came out of Vercel earlier this year. The Vercel team were studying the agent skills technique. Agent skills encode task-specific knowledge for working in particular areas, and use progressive disclosure to give agents more information if they decide they need it. Although skills are the accepted wisdom for context-specific context (ha), Vercel found that they were much less effective than expected. The agent did not invoke skills reliably: it had access to the documentation but didn’t choose to use it. Without explicit instructions, their agents scored no better with skills available than without them (53% on their benchmark). With explicit instructions to use the skills, the score rose to 79%. Even then, the instructions were fragile: changes in the wording used to tell agents to invoke the skill produced large swings in whether the agents did the right thing.
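Progressive disclosure means the agent sees only a short index of skills up front and has to actively request the full text. A minimal sketch of that shape, using a hypothetical skill registry and a hypothetical `load_skill` tool handler (real agent frameworks define their own skill formats and tool-calling APIs):

```python
# Hypothetical registry: skill name -> (one-line description, full body).
SKILLS: dict[str, tuple[str, str]] = {
    "routing-guide": ("How to add routes in this app", "FULL ROUTING GUIDE (long document)"),
    "migrations-guide": ("How to write database migrations", "FULL MIGRATIONS GUIDE (long document)"),
}

def build_system_prompt(skills: dict[str, tuple[str, str]]) -> str:
    """Only the index goes into context up front: names and one-line descriptions."""
    lines = ["You can call load_skill(name) to read any of these guides:"]
    lines += [f"- {name}: {desc}" for name, (desc, _body) in skills.items()]
    return "\n".join(lines)

def load_skill(name: str, skills: dict[str, tuple[str, str]]) -> str:
    """Tool handler: the full guide enters context only if the agent decides to call this."""
    _desc, body = skills[name]
    return body

print(build_system_prompt(SKILLS))
```

The failure mode Vercel reported lives in the gap between these two functions: the index is always in context, but nothing forces the agent to call `load_skill`, so the full guide may never arrive.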

Surprisingly, the most reliable technique in Vercel’s evaluations was simply to passively include all of the needed context in the CLAUDE.md/AGENTS.md file, which achieved a 100% pass rate on their eval. The main problem was that this was a huge amount of data, so they tried compressing it; unexpectedly, they were able to shrink the injected context by 80% while maintaining the same pass rate. This suggests that selective but deterministic context injection could be a viable alternative to the agent skills paradigm.
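The key difference from skills is who decides: with deterministic injection, the harness rather than the model chooses what goes into context. Vercel’s best-performing setup simply included everything in the instructions file; the sketch below illustrates the “selective but deterministic” variant instead, where hypothetical path-based rules pick which compressed notes to inject for a given task. The rules and note text are placeholders, not Vercel’s.

```python
from fnmatch import fnmatch

# Hypothetical rules: (file pattern, compressed notes to inject when it matches).
# fnmatch's "*" also matches "/", so "app/*" covers everything under app/.
RULES = [
    ("app/*", "ROUTING NOTES: (compressed routing docs for this repo)"),
    ("db/migrations/*", "MIGRATION NOTES: (compressed migration docs)"),
    ("*.test.ts", "TESTING NOTES: (compressed test-runner docs)"),
]

def build_context(touched_files: list[str], task: str) -> str:
    """Prepend the notes for every rule matching a touched file.
    The same inputs always yield the same context; the model never has to opt in."""
    notes = []
    for pattern, note in RULES:
        if note not in notes and any(fnmatch(path, pattern) for path in touched_files):
            notes.append(note)
    return "\n".join(notes + [f"TASK: {task}"])

print(build_context(["app/login/page.tsx"], "Add a logout button"))
# ROUTING NOTES: (compressed routing docs for this repo)
# TASK: Add a logout button
```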

This is far from the only successful approach to context engineering out there, and I’m very excited to see many companies doing novel research in this space.

2. Structured reasoning

When we ask an LLM to reason, the default is an unstructured chain of thought: the model produces something that looks like reasoning, but each step does not necessarily follow logically from the previous one. “Let’s think step by step” was the original intervention here, and while it helps, it doesn’t enforce rigour. That leaves an opportunity to impose more formal structure on how the model reasons within a single call, by requiring it to show its working in an auditable way.

A recent example from Meta researchers introduces what they call “semi-formal reasoning”. Instead of letting the model reason freely, they provide structured templates that act as “certificates”: the model must state explicit premises, trace execution paths through the code, and derive formal conclusions. The structure makes the argument auditable, because every conclusion has to be backed by stated premises and traced paths. The team evaluated this on patch equivalence: determining whether two pieces of code do the same thing. Accuracy went from 78% to 88% on curated examples and reached 93% on real-world agent-generated patches.
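To make the idea concrete, here is a minimal sketch of a certificate-style prompt for patch equivalence, plus a cheap check that the model actually filled in every section. The section names and wording are hypothetical, modelled loosely on the description above (premises, execution trace, conclusion); this is not the Meta team’s actual template. The check only enforces structure: it makes the reasoning auditable but cannot by itself confirm that the reasoning is correct.

```python
import re

# Hypothetical certificate template loosely following the premises/trace/conclusion shape.
CERTIFICATE_TEMPLATE = """Decide whether PATCH A and PATCH B are behaviourally equivalent.
Fill in every section; do not skip any.

PREMISES:
(list each fact about the code you rely on, one per line, numbered P1, P2, ...)

TRACE:
(for each relevant input, trace execution through both patches, citing premises by number)

CONCLUSION:
(EQUIVALENT or NOT EQUIVALENT, followed by the premises and trace steps that justify it)

PATCH A:
{patch_a}

PATCH B:
{patch_b}
"""

REQUIRED_SECTIONS = ("PREMISES:", "TRACE:", "CONCLUSION:")

def certificate_is_complete(response: str) -> bool:
    """Structural check only: all sections present, in order, and a verdict given."""
    positions = [response.find(s) for s in REQUIRED_SECTIONS]
    if -1 in positions or positions != sorted(positions):
        return False
    conclusion = response[positions[-1]:]
    return re.search(r"\b(NOT EQUIVALENT|EQUIVALENT)\b", conclusion) is not None

prompt = CERTIFICATE_TEMPLATE.format(patch_a="return x + 0", patch_b="return x")
```

A harness can re-prompt (or discard the answer) whenever `certificate_is_complete` fails, so a free-form reply never gets treated as a verdict.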

It’s very interesting to me that there are still gains to be made in the same category of intervention as the original “let’s think step by step”. Designing reasoning templates that enforce rigour is potentially something any practitioner can innovate on, especially if you are working on something domain-specific.

3. Multi-call orchestration

From the invention of “chain of thought” onwards, there has been a lot of research into how best to combine multiple LLM calls so that they can build on each other’s work to achieve better results.

There are many techniques advancing the frontier of multi-call orchestration, and this is where much of Deep Think’s jump from 31.1% to 84.6% on ARC-AGI-2 comes from. Google has not publicly released the exact algorithm for Gemini 3 Deep Think, but it is described as using parallel calls to explore multiple hypotheses. In addition, Google’s Aletheia maths research agent wraps Deep Think in an additional agentic loop, which verifies candidate solutions and decides whether each one can be revised into something that works or is totally flawed and needs to be discarded so the agent can start over. This approach allows Aletheia to be used to advance research-level mathematics.
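Google has not published Aletheia’s internals beyond descriptions like the one above, so the following is only a sketch of the general generate, verify, then revise-or-discard pattern, not Google’s algorithm. The three callables stand in for separate LLM calls (hypothetical interfaces you would wire to your own model client). The key design choice is that the verifier’s verdict decides between patching a candidate and throwing it away.

```python
from typing import Callable, Optional

# Verdicts a (hypothetical) verifier call can return for a candidate solution.
ACCEPT, REVISE, DISCARD = "accept", "revise", "discard"

def solve_with_verification(
    problem: str,
    generate: Callable[[str], str],                 # fresh attempt from scratch
    verify: Callable[[str, str], tuple[str, str]],  # (problem, candidate) -> (verdict, feedback)
    revise: Callable[[str, str, str], str],         # (problem, candidate, feedback) -> new candidate
    max_rounds: int = 5,
) -> Optional[str]:
    candidate = generate(problem)
    for _ in range(max_rounds):
        verdict, feedback = verify(problem, candidate)
        if verdict == ACCEPT:
            return candidate
        if verdict == REVISE:
            candidate = revise(problem, candidate, feedback)  # salvageable: patch it
        else:
            candidate = generate(problem)  # fundamentally flawed: start over
    return None  # give up rather than return an unverified answer

# Toy usage with deterministic stand-ins for the three model calls.
attempts = iter(["5", "8"])

def toy_verify(problem: str, candidate: str) -> tuple[str, str]:
    x = int(candidate)
    if x * x == 49:
        return ACCEPT, ""
    return (REVISE, "too high") if x * x > 49 else (DISCARD, "too low")

def toy_revise(problem: str, candidate: str, feedback: str) -> str:
    return str(int(candidate) - 1)

print(solve_with_verification("find x with x*x == 49", lambda p: next(attempts), toy_verify, toy_revise))
# 7
```

In the toy run, "5" is discarded, a fresh attempt produces "8", which is revised to "7" and accepted. In a software domain, `verify` could just as well be a test suite run instead of an LLM call.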

Part of Deep Think’s success is due to harness-specific post-training: reinforcement learning specifically designed to make the model good at reasoning in loops. This may at first sight seem like a major barrier to anyone not in a major lab. However, we would expect the reasoning improvements resulting from this kind of post-training to be generalisable to other kinds of harnesses, not just the specific Deep Think configuration.

Not knowing the specific Deep Think setup is no barrier to experimentation, either. The most basic example is majority voting: run the same prompt multiple times and take the most common answer. Research shows that this captures a surprisingly large share of the gains of more sophisticated multi-agent approaches. Another straightforward pattern is the building block of Aletheia: generate-verify-revise loops with separate LLM calls. In a domain like software engineering, we can use external grounding such as test suites and linters as deterministic verification steps, and decompose problems into steps that are verified independently.
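Majority voting (often called self-consistency) takes only a few lines. A minimal sketch, where `sample` is a hypothetical stand-in for a model call made with non-zero temperature so that repeated calls can disagree. It assumes answers are short and directly comparable; for free-form outputs you would first extract and normalise a final answer.

```python
from collections import Counter
from itertools import cycle
from typing import Callable

def majority_vote(prompt: str, sample: Callable[[str], str], n: int = 5) -> tuple[str, float]:
    """Sample n answers to the same prompt; return the most common one and its vote share.
    On a tie, Counter.most_common keeps the answer that was seen first."""
    answers = [sample(prompt).strip() for _ in range(n)]
    answer, count = Counter(answers).most_common(1)[0]
    return answer, count / n

# Deterministic stand-in for five stochastic model calls.
fake_outputs = cycle(["42", "41", "42", "42", "40"])
print(majority_vote("What is 6 * 7?", lambda p: next(fake_outputs)))
# ('42', 0.6)
```

The vote share is a useful by-product: low agreement is a cheap hint that a question may deserve a more expensive harness.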

DeepSeek published a particularly elegant version of this in their V3.2 work: one LLM generates a proof, a second LLM verifies it, and a third LLM checks whether the verifier is doing its job properly.
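The three-role structure is easy to reproduce with ordinary API calls. The sketch below wires up a generator, a verifier, and a meta-verifier; the prompts, the VALID/INVALID and TRUSTED/UNTRUSTED output conventions, and the acceptance rule are all hypothetical choices for illustration, not DeepSeek’s actual setup. The point of the third role is that a verifier can be wrong too (approving a flawed proof, or flagging errors that aren’t there), so its review is checked before anyone acts on it.

```python
from dataclasses import dataclass
from typing import Callable

LLM = Callable[[str], str]  # hypothetical: any function mapping a prompt to a completion

@dataclass
class Review:
    proof: str
    verdict: str           # the verifier's review, expected to end in VALID or INVALID
    verdict_trusted: bool  # the meta-verifier's judgement of that review

    @property
    def accepted(self) -> bool:
        # Accept only if the verifier passed the proof AND its review was judged sound.
        words = self.verdict.split()
        return bool(words) and words[-1] == "VALID" and self.verdict_trusted

def three_role_check(problem: str, generator: LLM, verifier: LLM, meta_verifier: LLM) -> Review:
    proof = generator(f"Prove the following statement:\n{problem}")
    verdict = verifier(
        f"Statement:\n{problem}\n\nProof:\n{proof}\n\n"
        "List any errors you find, then end with a single word: VALID or INVALID."
    )
    meta = meta_verifier(
        f"Statement:\n{problem}\n\nProof:\n{proof}\n\nReview:\n{verdict}\n\n"
        "Are the errors cited in this review real, and does its verdict follow from them? "
        "Answer TRUSTED or UNTRUSTED."
    )
    return Review(proof, verdict, meta.strip().upper().startswith("TRUSTED"))

review = three_role_check(
    "The sum of two even integers is even.",
    generator=lambda p: "Let a = 2m and b = 2n. Then a + b = 2(m + n), which is even.",
    verifier=lambda p: "No errors found. VALID",
    meta_verifier=lambda p: "TRUSTED",
)
print(review.accepted)  # True
```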

Crucially, multi-agent patterns are just multiple API calls linked together: if we want to try a domain-specific tweak to a frontier technique, we don’t have to be in a big lab to experiment with it.

2026 - the year of the harness layer?

The harness layer is having its moment. We’ve been doing prompt engineering since GPT was invented, but the accessible gains at the harness layer are now catching up in magnitude with those from pre-training and post-training. Context engineering, structured reasoning, and multi-call orchestration are all producing results that would have been considered astonishing a year or two ago.

The big labs will keep pushing pre-training and post-training forward. But the harness layer is where the rest of us get to play, and it has finally hit the steep part of the curve. So for independent practitioners working to improve what AI produces in a specific domain, this is where to invest effort in 2026.

Originally published as an article on X. Follow Yanqing for more on AI coding and software quality.