Why is Your Codebase Like This?

“Why is it like this?”

The most frustrating five words in software engineering (with the possible exception of “it works on my machine”). I’ve muttered them at my own code, at other people’s code, and, increasingly, at code that “I” wrote with a team of AI agents just last week.

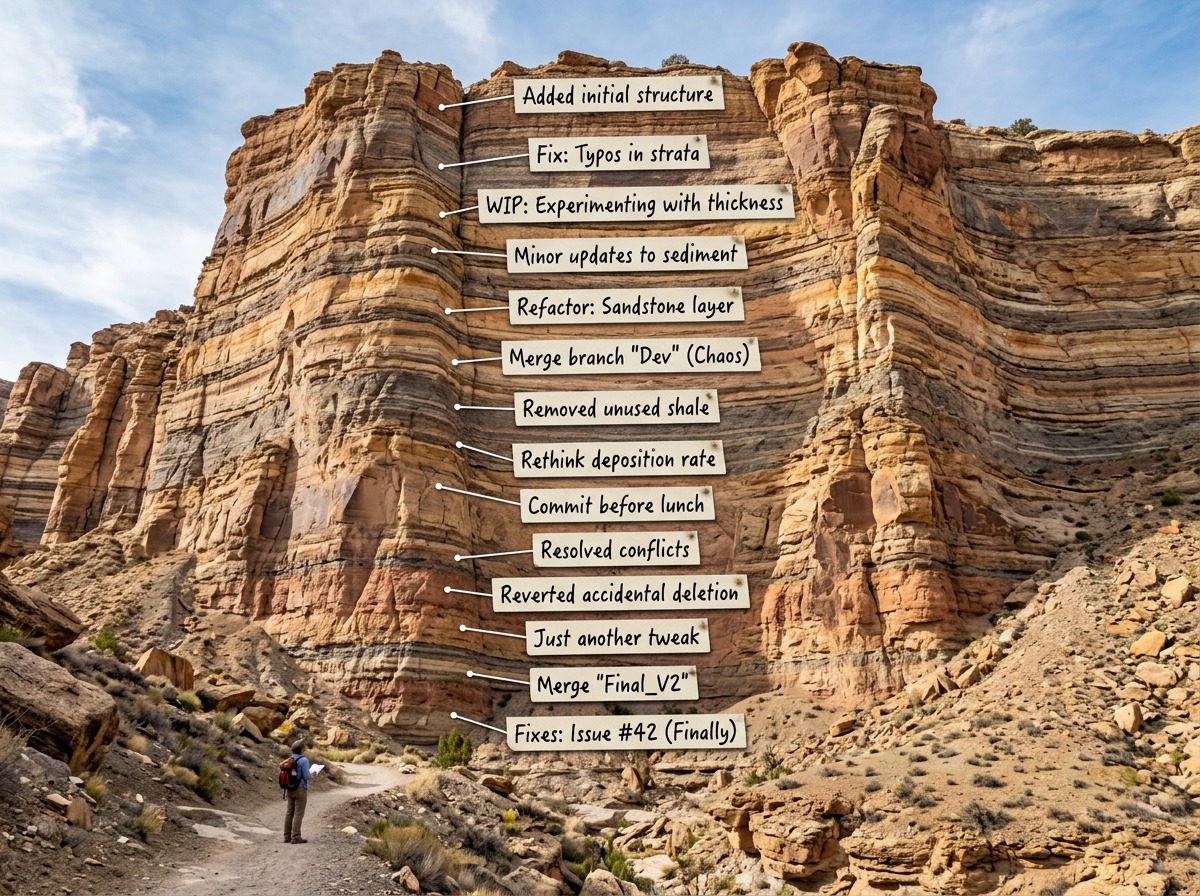

The git log says what changed. If you’re lucky there’s a design or spec doc somewhere. Nobody recorded why.

The rambly intro

I’ve been working with Claude Code on a complex project recently, and I set up a fairly deliberate workflow for it. When it builds a new enhancement, I have it create a high-level design document first, then review that document (saving the marked-up copy). Next it creates an implementation plan and reviews that too. I ask it to explicitly record why it’s making the decisions it’s making, and to run a devil’s advocate check against its own reasoning. Then it records the changes that came out of those decisions, and only then does it implement.
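To make the ordering concrete, here’s a minimal Python sketch of that workflow as a gate: implementation only starts once every earlier artifact exists. The file names (`design.md`, `design-review.md` and so on) and the one-folder-per-enhancement layout are assumptions made for illustration. In practice the agent writes these files itself; the script just shows the sequence.

```python
from pathlib import Path

# Hypothetical artifact names, in the order the workflow produces them.
STAGES = [
    "design.md",         # high-level design document
    "design-review.md",  # marked-up copy from reviewing the design
    "plan.md",           # implementation plan
    "plan-review.md",    # review of the implementation plan
    "decisions.md",      # decision log: reasoning, devil's advocate check, resulting changes
]


def missing_stages(feature_dir: Path) -> list[str]:
    """Return the artifacts not yet present in feature_dir, in workflow order."""
    return [name for name in STAGES if not (feature_dir / name).is_file()]


def ready_to_implement(feature_dir: Path) -> bool:
    """Only start implementing once every earlier artifact exists."""
    return not missing_stages(feature_dir)
```
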

This is probably overkill. It might be overkill. It’s not overkill - it’s great for getting better-quality code out of your context windows. But today I want to talk about the awesome side effect that makes it so worth it that I think everyone should do this, always.

A couple of months in, I (OK, Claude, and then we talked about it) hit a bit of code that was doing something… odd. Not obviously broken, but the kind of thing where you look at it and can’t tell whether it’s clever on purpose or just a mess. I didn’t want to “fix” it and break something subtle, but I couldn’t work out what it was actually doing just by reading it, and Claude was confidently changing its mind every time I asked another question.

So I asked Claude to go and properly explore the repo, including all those design documents and decision logs I’d been making it create, and come back and tell me what was going on.

And it came back with something like: “Right, so originally this area was solving problem A. But then we needed to handle problem B as well, and the current implementation is where those two concerns intersect. That’s why it looks weird. If we change it, we need to make sure we don’t break either of those two things.”

I could have eventually worked that out by reading the code carefully. Maybe. Claude demonstrably couldn’t. But having the reasoning already recorded, from the point where the decisions were actually made? That was fast, it was accurate, and it meant I could change things with confidence instead of with crossed fingers.

This is not just “good documentation”

OK, I hear you. “Record your decisions” is not exactly a hot take. We’ve all been told to write better commit messages and keep ADRs and document our architecture decisions and so on. And most of us don’t do it consistently, because in the moment it feels like overhead and we’re busy and we’ll remember why we did it this way (for at least 30 seconds).

But I think there’s something specific happening here that goes beyond normal documentation, and it’s to do with how AI-assisted development is changing things.

In AI research, there’s a whole field called interpretability: working out why a model produces the output it does for a given input, and what’s going on inside the forward pass. It’s important work. But it’s focused on understanding a single call to a model.

What I’ve been bumping into is a different problem. Agentic coding workflows aren’t one call - they’re dozens or hundreds of calls, orchestrated together, with decisions feeding into decisions feeding into more decisions. The entire “plan, review, implement, test, iterate” workflow is one chain, and I often have several chains going in parallel. And the question “why did this system end up looking like this?” can’t be answered by understanding any single step. You need to understand the whole trail of decisions.

I’ve been calling this systemic provenance in my head, which is a bit dry but accurate. It’s the traceable history of why a system is the way it is, at the level of the whole decision-making process rather than any individual call within it.

(In my head it’s also kinda “decision archaeology”, which is what we used to do, rather more slowly, back in the old human days. But the goal here is that we don’t have to go digging at all, because nobody burns down the Library of Alexandria.)

Why AI makes this both worse and more fixable

Human software engineers have always been bad at recording their reasoning. We know we should. We mostly don’t. The decisions live in people’s heads, in Slack threads that nobody will ever search, in meeting notes that might as well not exist, in the shared tacit knowledge of the team where it slowly drifts out of our context windows without us noticing.

AI agents make this problem worse, because (a) they don’t have memory between sessions and (b) they can generate enormous amounts of code very quickly. You can accumulate a terrifying number of unexplained decisions in an afternoon. A human developer who wrote three weird functions at least has the option of explaining them. An AI agent that wrote thirty? The reasoning is gone the moment the session ends (not that this will stop it from happily making up some plausible rubbish for you later).

But (and this is the bit that’s exciting) AI agents also make the problem more fixable. Unlike human developers, if you tell an AI agent “create a design document before implementing, review it, run a devil’s advocate check on your own plan, and for every significant decision and piece of review markup, record a summary of your reasoning”, it will actually do it. Every time. Without the passive-aggressive sigh. Without gradually letting the practice slide after a month or two.

And the repo becomes self-explaining. Not because someone went back and documented everything after the fact, but because the reasoning was captured at the point the decisions were made.

What you can actually do here

I’m not going to pretend I’ve got this perfectly figured out. But the workflow I described above (design doc, review, decision log, then implement) has been working well enough that I’m now annoyed when I work on projects where I didn’t have that from the start.

The thing that makes the biggest difference, I think, is recording three things alongside your changes:

What problem were you actually solving? Not the ticket in Jira, Linear or whatever you’re using, but the real problem. What else did you consider, and why did you decide against it? And what assumptions are baked into this solution that, if they changed, would mean this code needs to change too?

That last one is sneakily the most valuable. Code doesn’t usually rot from being edited a lot. It rots because the assumptions it was built on stop being true, and nobody remembers what those assumptions were.
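Here’s one way those three things could be captured as a structured record, as a minimal Python sketch. The `DecisionRecord` and `Alternative` classes, their field names, the `affected_by` helper and the auth-module example are all hypothetical, not any standard ADR format. The helper is there to show why the assumptions field pays off: when an assumption stops being true, you can search for the decisions that rested on it.

```python
from dataclasses import dataclass, field


@dataclass
class Alternative:
    option: str
    rejected_because: str


@dataclass
class DecisionRecord:
    title: str
    problem: str  # the real problem being solved, not the ticket ID
    alternatives: list[Alternative] = field(default_factory=list)
    assumptions: list[str] = field(default_factory=list)  # if these change, revisit the code

    def to_markdown(self) -> str:
        """Render the record as a markdown section for a decision log file."""
        lines = [f"## {self.title}", "", f"Problem: {self.problem}", "", "Alternatives considered:"]
        if self.alternatives:
            lines += [f"- {a.option}: rejected because {a.rejected_because}" for a in self.alternatives]
        else:
            lines.append("- (none recorded)")
        lines += ["", "Assumptions (revisit if any stop being true):"]
        if self.assumptions:
            lines += [f"- {a}" for a in self.assumptions]
        else:
            lines.append("- (none recorded)")
        return "\n".join(lines)


def affected_by(records: list[DecisionRecord], changed: str) -> list[str]:
    """Titles of records whose assumptions mention `changed` (case-insensitive substring match)."""
    needle = changed.lower()
    return [r.title for r in records if any(needle in a.lower() for a in r.assumptions)]


# Hypothetical example record.
record = DecisionRecord(
    title="Token refresh in the auth module",
    problem="Mobile clients were being logged out after short periods offline",
    alternatives=[Alternative("Longer-lived access tokens", "it widens the window for a stolen token")],
    assumptions=["All clients are first-party apps we control"],
)
print(affected_by([record], "first-party"))  # ['Token refresh in the auth module']
```

The agent could write the `to_markdown()` output into the repo’s decision log alongside each change, so the record lives next to the code it explains.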

If you’re using an AI coding agent, build this into how you work with it. It’s trivially easy to add to your instructions and the agent won’t mind. If you’re working with humans… well, good luck. But at least you know what you’re aiming for.

The bigger picture (briefly, I promise)

I think there’s a gap in how we think about AI-assisted systems. We have interpretability for understanding what happens inside a single model call. We have version control for tracking what changed in code. But we don’t really have a discipline for understanding why a system built through many AI-assisted decisions ended up as it did. The reasoning layer - the provenance of the decisions - is largely missing.

I don’t have a grand solution for this. But I do think that anyone building seriously with AI coding agents should be thinking about it, because the alternative is codebases that grow fast and become opaque even faster.

The Bottom Line

Tell your AIs to rigorously record the why, not just the what. Your future codebase will be less mysterious and your future self will be less confused. And if someone tells you this is just version control with extra steps, ask them to explain from their git log alone why their authentication module has that weird interface.

Originally published on Edmund Pringle’s Substack. Follow Ed for more on software quality and engineering leadership.