There Is No Mainline User

In the 1920s, the US Army’s air arm, the forerunner of today’s Air Force, designed cockpit seats around the dimensions of the average pilot. The cockpit kept causing accidents, and in 1952, Lt. Gilbert S. Daniels at the Wright Air Development Center was tasked with finding the root cause.

Daniels measured 4,063 active pilots across 140 body dimensions, took the ten most relevant for cockpit fit, and defined “average” as the middle 30% on each axis - a fairly generous window. Then he counted how many pilots fell inside the average band on all ten dimensions at once.

You’ve probably heard this story before. The answer was zero.

The average pilot was a statistical artefact. The cockpit that was meant to fit the “typical pilot” actually fit none of them. Daniels’s recommendation was to stop designing for the average and design for adjustability instead. This is why the seats you sit in today - car, office, fighter jet - have levers.
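A back-of-envelope calculation shows why zero is less surprising than it sounds. The sketch below assumes the ten dimensions are statistically independent, which real body measurements are not, so treat it as a rough illustration rather than a reconstruction of Daniels’s analysis. The function name is made up for this example.

```python
def expected_all_average(population: int, band: float, dimensions: int) -> float:
    """Expected head-count inside the 'average' band on every dimension.

    ASSUMPTION: the dimensions are independent. Real body measurements are
    correlated, so this is only a rough illustration.
    """
    return population * band ** dimensions


PILOTS = 4063  # pilots measured in the study
BAND = 0.30    # "average" = the middle 30% on each axis

for dims in (1, 3, 10):
    print(dims, round(expected_all_average(PILOTS, BAND, dims), 3))
```

Under that independence assumption, the expected number of pilots who are average on all ten dimensions is about 0.02. Each extra dimension multiplies the pool by 0.3, so even a generous band empties out fast.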

Specifying typical user flows is like measuring the average pilot

When you build software, you code against the path you expect each imagined class of user to take through it. The user wants to create a new account, so they click the menu button, then “Create account”, then they type in their desired username, email address and password and hit “Register”…

You might describe these as the mainline user flows.

These mainline user flows are like the average pilot. They don’t exist in the real world.

Every real user, at some point, is going to deviate from your mainline. At some point, everyone is going to do something that hits an “edge case”. Maybe they deviate in the way they interact with your product: They accidentally open two of the same tab at once. They hit back when they shouldn’t. They make a typo in their password. They leave the tab open too long and the computer goes to sleep. Or they’re following the mainline flow, but in an unusual context. The power user who never touches the mouse. The screen-reader user. The person who’s currently on a train going between signal hotspots every 2 minutes. The parent using your page one-handed on their phone because they’re holding a baby.

Every edge case is somebody’s mainline experience from their seat. And when the seat doesn’t fit them, they stop coming back. It’s like the opposite of the Swiss cheese model: instead of failures happening only when the holes in every layer line up, every layer of the stack has a hole that some particular slice of your users falls through. If you’re only coding to the mainline user spec, at some point you will drop every user.

(This goes for your team’s internal flows as well as customer ones. Every developer has their own development setup, and their own quirks of debugging. Coding to a specification of the team’s workflows will accumulate many unexpected friction points.)
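To put rough numbers on the claim that a mainline-only product eventually drops every user, here is a minimal sketch. It assumes every interaction carries the same small, independent chance of leaving the mainline. The 5% figure and the function name are invented for illustration, not measured from any product.

```python
def share_still_on_mainline(p_deviate: float, interactions: int) -> float:
    """Share of users who have never left the mainline flow.

    ASSUMPTION: each interaction independently deviates with probability
    p_deviate. Real users are messier; this only shows the shape of the curve.
    """
    return (1.0 - p_deviate) ** interactions


P_DEVIATE = 0.05  # hypothetical: 1 in 20 interactions goes off-script

for n in (1, 10, 50, 200):
    share = share_still_on_mainline(P_DEVIATE, n)
    print(f"{n:>3} interactions: {share:.1%} have never hit an edge case")
```

With these made-up numbers, fewer than one user in ten is still on the mainline after 50 interactions. The exact rate matters less than the compounding: any non-zero chance of deviation, repeated often enough, reaches nearly everyone.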

“Write the spec” makes a presumption of legibility

There are a lot of people who answer this problem by saying we need a better spec. If we can just capture what the users actually do in every minute detail up front, then we can cover every edge case.

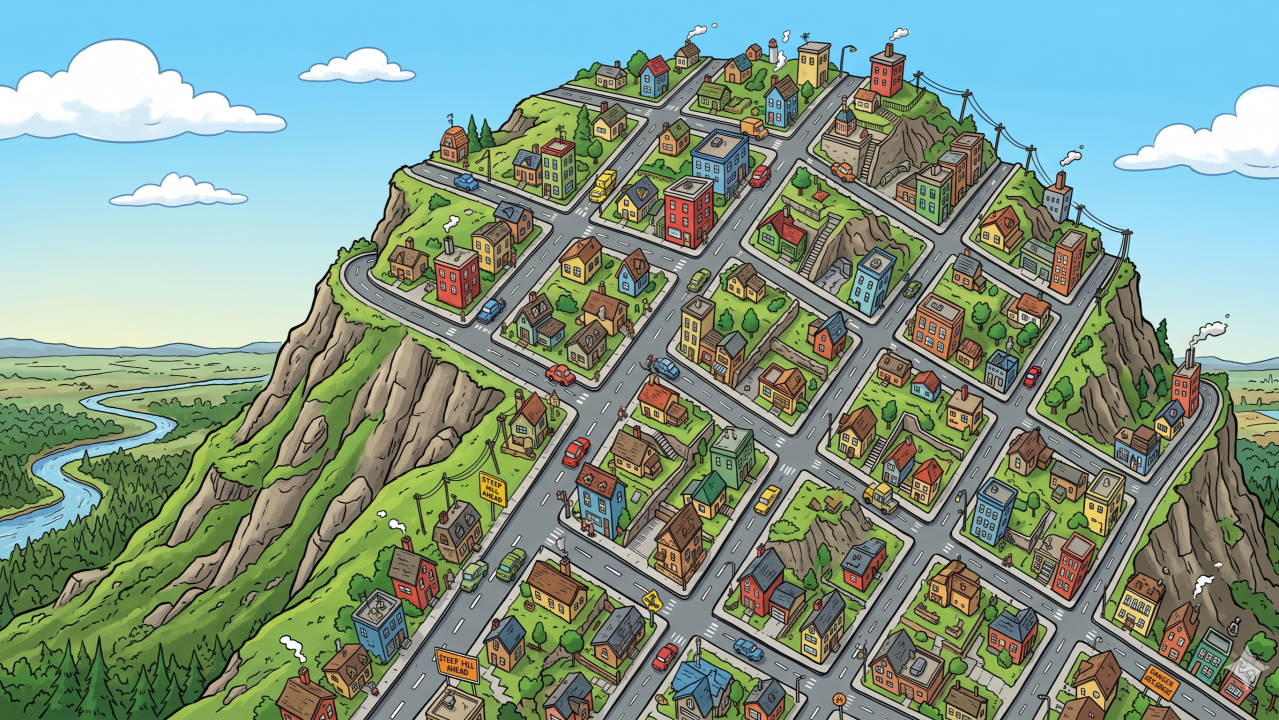

James C. Scott’s Seeing Like a State is about why high-modernist projects - scientific forestry, planned cities, collectivised agriculture - failed across the 20th century. These projects failed because the planners could only act on what was legible to them. They did not have access to local, situated knowledge learned from lived experience: what foresters knew about which trees grew where, what farmers knew about which patch of soil tolerated which crop. This contextual knowledge, which Scott calls mētis, was illegible to the planner, so it was ignored or overwritten in favour of whatever was describable, mappable and countable. This is how you end up with grid-shaped cities on slopes that make the straight roads unusable.

A software spec is a legibility project. It captures what can be written down. But what makes a product feel right - to users, to the team that maintains it, to stakeholders - is full of mētis. You can interview every prospective user for a month and still write the wrong spec, because they don’t know that the thing they’re taking for granted matters until they meet your product and something feels off.

We know much more than we can tell

So if we can’t capture the nuances of user behaviour, how is it that we ever write working software in the first place?

A useful angle on this is Michael Polanyi’s tacit knowledge: we can know more than we can tell. A skilled cyclist can’t write a spec for cycling, but she can ride, and she can tell you if a bike path doesn’t work for her. Your dev team have lots of tacit knowledge about user behaviour, because they too are users of software. They also have tacit knowledge in the form of accumulated wisdom from years at their craft - a front-end engineer knows what “good UI” looks like even if they can’t describe it perfectly to a machine.

But tacit knowledge is more than just illegible; it can be actively anti-legible. If a skilled developer tried to write a description of “how to write good software”, they would produce a low-resolution projection of a high-dimensional thing. More frustratingly, that resolution is lowest exactly where the human is most capable. Like a fish describing water, the things you know best are the most invisible to you.

The gap between spec and user experience

So for now, the gap between spec and a product that feels right is something every software project has to contend with. This isn’t a “try harder” problem - it’s not a PM failure or a process failure. The gap is inherent in the relationship between specification and experience. Even a hypothetically perfect upfront process only produces a partial spec, because the interesting edge cases emerge on human contact with the product.

Waterfall, agile-with-a-big-PRD, spec-driven development, one-shot vibe coding with an AI agent with a detailed prompt: these workflows all hit the same failure mode. You can’t specify everything that matters up front.

Testing as investigation

If the spec can’t get you to the right product, what can?

The answer is testing - not as in writing tests for verification (does it match the spec?), but as in the discipline of targeted experimentation (what happens if?).

A skilled testing practitioner knows how to interrogate the product in a way that surfaces the oddness that you didn’t specify ahead of time. A good test can change your opinion of what “good” means for your product - allowing you to update your models and informing the next round of investigation. The spec, if you keep one, becomes a record of what you’ve discovered. A living descriptive map, not a fixed prescriptive one.
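As a toy illustration of a “what happens if?” test, the sketch below random-walks through user actions against a signup backend written only for the mainline flow, then reports the shortest action sequence that breaks an invariant. Everything in it is hypothetical and invented for this example: `SignupService`, the action names, the rule that going back clears the form, and the invariant itself. A real investigation would be driven by a tester’s judgement rather than a random number generator.

```python
import random
from dataclasses import dataclass, field


@dataclass
class SignupService:
    """Hypothetical toy backend, written only for the mainline flow."""
    accounts: list = field(default_factory=list)

    def register(self, email: str) -> None:
        # Mainline assumption: called exactly once per email address.
        self.accounts.append(email)


ACTIONS = ["type_email", "submit", "back", "double_click_submit"]


def run_session(seed: int, steps: int = 8) -> list:
    """Random-walk one user session; return the trace if an invariant breaks."""
    rng = random.Random(seed)  # seeded, so any failure can be replayed
    service = SignupService()
    email_typed = False
    trace = []
    for _ in range(steps):
        action = rng.choice(ACTIONS)
        trace.append(action)
        if action == "type_email":
            email_typed = True
        elif action == "back":
            email_typed = False  # hypothetical: going back clears the form
        elif action == "submit" and email_typed:
            service.register("a@example.com")
        elif action == "double_click_submit" and email_typed:
            service.register("a@example.com")
            service.register("a@example.com")
        # Invariant under investigation: one email, at most one account.
        if len(service.accounts) != len(set(service.accounts)):
            return trace
    return []


def explore(n_sessions: int = 200) -> list:
    """Return the shortest failing trace across seeded sessions, or []."""
    failures = [t for t in (run_session(s) for s in range(n_sessions)) if t]
    return min(failures, key=len) if failures else []


print(explore())
```

The useful output is the trace rather than a pass/fail verdict: a short, replayable story about a user who did something the spec never mentioned, which you can then decide how the product should handle.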

Or you could opt for the “test in production” approach. Treat every interaction between your system and real humans as a test, and engineer it in such a way that failures are informative and not catastrophic.
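One way to make production failures informative rather than catastrophic is to wrap new behaviour so that a failure is recorded with its context and the user quietly gets the known-good behaviour instead. The following is a minimal sketch under that assumption. `guarded`, the two recommendation functions and the context fields are hypothetical names for illustration, and a real system would also need rollout controls and alerting.

```python
import logging
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("experiment")


def guarded(new_path: Callable[[], T], fallback: Callable[[], T], context: dict) -> T:
    """Try the new behaviour; on failure, record what happened and fall back."""
    try:
        return new_path()
    except Exception:
        # Informative: keep the traceback plus the situation the user was in.
        log.exception("new path failed; context=%r", context)
        # Not catastrophic: the user still gets the known-good behaviour.
        return fallback()


def new_recommendations() -> list:
    # Hypothetical new code path that fails for this user.
    raise TimeoutError("hypothetical failure in the new code path")


def old_recommendations() -> list:
    return ["known-good default"]


result = guarded(
    new_recommendations,
    old_recommendations,
    {"step": "home", "input": "keyboard-only"},  # hypothetical context fields
)
print(result)
```

The context dictionary is where the investigation value lies: it records the seat the user was sitting in when the mainline assumption failed.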

When something goes sideways in front of a real user, the instinct is to say we missed a requirement. The tester’s angle is to say the territory has a feature the map didn’t. The answer is not to go back to some theoretical product management drawing board, but to continue to explore the interaction between human and software until we understand what actually happens in the real world and can update the product to fit the people.

Our reverse-Swiss-cheese model also tells us that the work of making software actually feel right is irreducibly fiddly. You’re not hunting one showstopper bug that affects everyone. You’re hunting hundreds of small bugs, each of which matters to a different user in the place where they’ve deviated from your assumptions. Getting to software that your users trust is the cumulative outcome of many rounds of investigative, creative testing.

Can AI models learn tacit knowledge?

All of what I’ve said here is about why humans can’t write perfect specs. Will an AI be able to legibilise the illegible?

I’m not an AI capabilities pessimist - AIs are not restricted to only ever being as good as humans. Current AI models can learn tacit knowledge, with the right training data. Through supervised learning, diagnostic AIs that look at images can learn patterns that doctors don’t know how to describe. The resulting AIs can exceed human performance at those same tasks.

But AIs can only acquire this intuition when they have learned from good examples. All the major AI labs are working on this problem: finding domains where they can generate good examples of “correctness”, in order to use them as training data. The domains where this has been easiest (maths problems, for example) are the domains where we’re seeing the most impressive post-training results for LLMs.

But a labelled training data set for “what makes software feel right to particular humans” doesn’t exist yet, and constructing one across all the quality dimensions of all software is a hard problem. I expect this to get chipped away at one dimension at a time - AIs are already doing pretty well at getting the visual vibe of UI right, for example, because we trained AIs to imitate human taste at what looks good on a website.

There will be a day when we manage to teach our agents to work in a way that captures all our best engineers’ tacit knowledge about software engineering, and all humans’ tacit knowledge about user behaviour. Until then, we don’t have an agent that will write the perfect spec for us. Which brings us back to the cockpit.

There is no mainline user

The mainline user is as mythical as the average pilot. You can’t one-shot a spec, no matter how much you model different user classes. You can only build a cockpit that adjusts, watch real people sit in it, and keep adjusting, because every user sits differently, and the ways they sit differently aren’t the ways you expected.

In the era of cheap code, this is a big chunk of the remaining work. The model can write the function you asked for. The effort is figuring out whether the thing you asked for is actually right when real humans get to it.

Originally published as an article on X. Follow Yanqing for more on AI coding and software quality.