The Importance of Being Lazy

One of the most overlooked skills for a software engineer is laziness. It’s the ability to ask “do we really need to?” before every decision, every task.

Many of us engineers like to talk about simplicity. We get that simplicity leads to better quality. Less code is easier to reason about. Quicker to implement. Easier to test. Presents a smaller surface for bugs. For security exploits.

And yet.

Most products I’ve seen are over-complex. Byzantine architectures with myriad components. Flow diagrams with spaghetti mazes of arrows. Features that may no longer be used in the field, but which are kept because it is too expensive to find out if anyone cares.

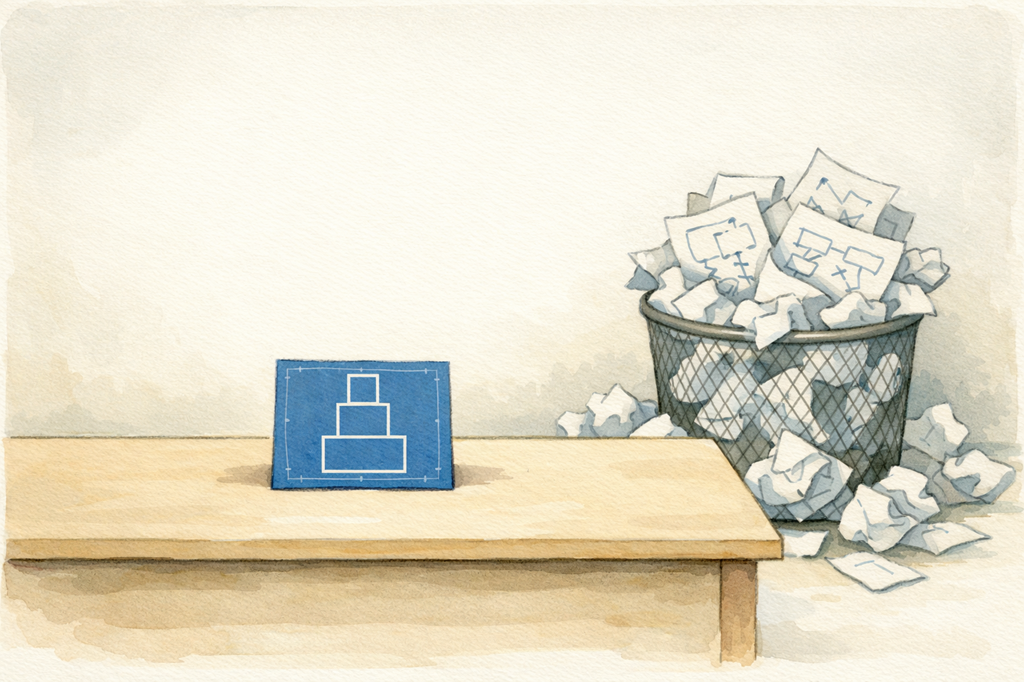

Unfortunately, engineers are incentivized to produce complex designs. Why? Because a truly simple design looks obvious. It doesn’t earn the respect something more complex would. Produce an architecture diagram full of boxes and arrows, and it’s obvious what you’ve been doing. But spend three months producing a diagram with three boxes stacked on top of each other? Questions will be asked.

Which is why laziness matters. It’s the last line of defense against creeping complexity. An “I don’t want to” attitude pays dividends. Years ago it stopped my team adding a third server type (personally, I thought two types was already one too many). It stopped us adding features no one would ever use. It stopped us “future-proofing” by adding APIs nobody would ever call.

The problem is most engineers are too scared to tell their boss “I don’t want to.” I get it. It isn’t an obvious career move. And laziness producing better quality? That’s another non-obvious leap.

Problem is, our AI coding friends have learnt from us. Like us, they talk a good talk about simplicity and quality. But in practice? Not so much.

The emulator

Over the past few weeks I’ve noticed the emulator I’ve been building getting slower. And that’s despite Claude and Codex spending hours allegedly “improving” its performance. The main emulation loop is inspired by DOSBox and is delightfully simple:

- Execute program instructions for 1 ms.

- Do housekeeping (dispatch interrupts, update timers, etc.).

- Repeat.
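The loop above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `Machine`, `execute`, `housekeeping`, `raise_irq` and the cycle budget are hypothetical stand-ins, not the emulator’s actual code or DOSBox’s. What matters is the shape: one place runs instructions, and one place advances timers and dispatches interrupts.

```python
from dataclasses import dataclass, field

SLICE_MS = 1             # run the CPU in 1 ms slices
CYCLES_PER_SLICE = 4772  # hypothetical budget: roughly 1 ms of a 4.77 MHz 8088


@dataclass
class Machine:
    """Hypothetical stand-in for the emulated PC; not the emulator's real API."""
    cycles: int = 0
    elapsed_ms: int = 0
    pending_irqs: list[int] = field(default_factory=list)
    dispatched_irqs: list[int] = field(default_factory=list)

    def raise_irq(self, irq: int) -> None:
        # Devices only *request* interrupts; nothing is dispatched here.
        self.pending_irqs.append(irq)

    def execute(self, cycle_budget: int) -> None:
        # Placeholder for the CPU core: simply consume the cycle budget.
        self.cycles += cycle_budget

    def housekeeping(self) -> None:
        # The single place where timers advance and interrupts are dispatched.
        self.elapsed_ms += SLICE_MS
        self.dispatched_irqs.extend(self.pending_irqs)
        self.pending_irqs.clear()


def run(machine: Machine, total_ms: int) -> None:
    for _ in range(total_ms // SLICE_MS):
        machine.execute(CYCLES_PER_SLICE)  # 1. execute instructions for 1 ms
        machine.housekeeping()             # 2. dispatch interrupts, update timers
                                           # 3. repeat


if __name__ == "__main__":
    m = Machine()
    m.raise_irq(0)
    run(m, 10)
    print(m.elapsed_ms, m.cycles, m.dispatched_irqs)  # prints: 10 47720 [0]
```

Keeping interrupt dispatch and timer updates inside one `housekeeping` step is what keeps the loop easy to reason about; every additional place that touches them is another path to check.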

This is the model the emulator has used from the start. No need to reinvent wheels. But it occurred to me I should probably check with Claude whether we had strayed…

Hmm. A sophisticated nuance? My spidey sense is tingling. This feels like yet another engineer making the “complexity == good” pitch. Time to go digging.

It quickly became apparent this was more than a “nuance”. Nor was it sophisticated. Complex, yes. But sophisticated? No. Over time, things had been added. Sometimes to fix bugs. Sometimes to “improve” performance. Net result? The main loop was now a horrifying muddle. Interrupts handled in multiple places. PIT timing updated in multiple places, and very difficult to reason about. A Bresenham accumulator had appeared. Why did we need one? And what even is a Bresenham accumulator?

So I got Claude to fix it. We agreed a clear, simple design. I pointed Claude at the long-standing guidance in claude.md advising against premature optimisation. And yet, despite all this, the next iteration retained a large chunk of the original timing code, now moved into a separate, non-mainline function. Why? Because 200 test cases relied on it. Claude kept the old code so those tests would keep passing. Err? Might there be a simpler solution?

It’s an example of something I’ve noticed with the models: they like to keep old code around “just in case.” Sometimes it’s behind a feature gate. Sometimes behind an environment variable. I hate it. Old code expands the surface area where bugs and security exposures can thrive. If you don’t need it, get rid of it. If the worst comes to the worst, it’s still in git.
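To make the pattern concrete, here is a hypothetical example of old code surviving behind an environment variable. `LEGACY_TIMING`, `legacy_pit_ticks` and `current_pit_ticks` are invented for illustration; only the PC timer’s clock rate (about 1.193182 MHz) is a real figure.

```python
import os

PIT_HZ = 1_193_182  # PC 8253/8254 timer input clock, approx. 1.193182 MHz


def legacy_pit_ticks(elapsed_ms: int) -> int:
    # Old path: truncates to 1193 ticks per ms, so it loses 182 ticks every second.
    return elapsed_ms * (PIT_HZ // 1000)


def current_pit_ticks(elapsed_ms: int) -> int:
    # New path: exact integer arithmetic, rounded down to whole ticks, no drift.
    return elapsed_ms * PIT_HZ // 1000


def pit_ticks(elapsed_ms: int) -> int:
    # The anti-pattern: old code kept alive behind a hypothetical env var,
    # so there are now two timing behaviours to test, debug and secure.
    if os.environ.get("LEGACY_TIMING") == "1":
        return legacy_pit_ticks(elapsed_ms)
    return current_pit_ticks(elapsed_ms)
```

The lazy fix is to delete `legacy_pit_ticks` and the branch, leaving `pit_ticks` as just the current path. If the old behaviour is ever needed again, git has it.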

But why do models behave like this?

I reckon it’s because they’ve been trained on human experiences. They’ve learnt humans like to keep old code around, just in case. Somehow it’s become associated with good practice.

And it gets worse. Since the models have only seen human estimates for task lengths, those are the units they default to. Claude will regularly tell me a piece of refactoring will take months, when I know it can do it overnight (in fairness Opus 4.6 is much better than 4.1 in this respect, but it’s not solved).

Assuming a human pace leads to complex, multi-part implementation plans, which make no sense if the change can be made overnight. Then there’s the deeply ingrained assumption that all code is deployed in production (even when the model is explicitly told it isn’t), which leads to detailed migration and rollback strategies. And yet more complex implementation plans. To some degree this can be controlled via claude.md or agents.md, and with regular reminders in prompts. But not entirely: the human priors are hard for the models to overcome.

And so?

The models need to get lazier. By which I mean think more. And code less. They need to refuse to implement migration or rollback plans unless they are convinced of the need. Resist the temptation to add feature gates or environment variables. Accept they’re unlikely to need Bresenham’s algorithm, no matter how cool it might sound. The world of software engineering is changing: we can do more, faster. There’s the potential to produce better-quality software than ever before. But the models need to learn: simply copying the priors of the humans who came before isn’t sufficient. So, for now, I’ll still be here. Deleting things and forcing Claude to be lazy.

PS A Bresenham accumulator is the error-accumulation trick from Bresenham’s line-drawing algorithm. Quite why we needed one in a timing algorithm remains a mystery.
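For reference, the error-accumulator trick at the heart of Bresenham’s algorithm fits in a few lines. The sketch below applies it, hypothetically, to spreading the PC timer’s non-integer rate (about 1193.182 ticks per ms) across 1 ms slices. `TickAccumulator` and its method are invented names; this illustrates the technique and is not a reconstruction of what ended up in the emulator.

```python
PIT_HZ = 1_193_182        # PC timer input clock, approx. 1.193182 MHz
SLICES_PER_SECOND = 1000  # 1 ms slices

BASE_TICKS, REMAINDER = divmod(PIT_HZ, SLICES_PER_SECOND)  # 1193 and 182


class TickAccumulator:
    """Bresenham-style error accumulator (hypothetical illustration).

    Every slice gets BASE_TICKS ticks. The fractional part builds up in
    `error`; when it reaches a whole tick, one extra tick is emitted. This is
    the same trick Bresenham's line algorithm uses to decide when to step y.
    """

    def __init__(self) -> None:
        self.error = 0

    def ticks_for_slice(self) -> int:
        ticks = BASE_TICKS
        self.error += REMAINDER
        if self.error >= SLICES_PER_SECOND:
            self.error -= SLICES_PER_SECOND
            ticks += 1
        return ticks


acc = TickAccumulator()
first_six = [acc.ticks_for_slice() for _ in range(6)]
print(first_six)  # prints: [1193, 1193, 1193, 1193, 1193, 1194]
```

Over 1000 slices the extra ticks add up to exactly 182, so one second of slices yields exactly 1,193,182 ticks, using integers only.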

Originally published on Martin Davidson’s Substack.