How Fast Will AI Get Better at Software?

I see lots of takes online from people saying that AIs will never be able to write software, because look how bad they are at it right now: look how unmaintainable the code is, and look how lost they get in a complicated legacy codebase. At the other extreme, I see a lot of people saying that AIs have already “solved software”, or are about to, and that you’d better start laying off all your software developers now.

Clearly, the story is somewhere in between. AI is already very capable at some software tasks - like spinning up a greenfield web app demo from a prompt. The interesting question is, how good is it going to get, and how quickly? How much change are we going to see in the industry in the next few years? How worried should we be about our jobs?

To answer that question, I think it’s more useful to look at the rate of change of AI capability than to take a point estimate of where it is right now. So how do we go about measuring that rate of change?

The two-year pattern

When you look at other domains of AI capability, there’s an interesting trend: for any given task, it takes about two years to go from “it’s a party trick, we can just about get the AI to do this with a lot of hand-holding” to “it’s on par with humans, or even better.”

For example, let’s look at image generation.

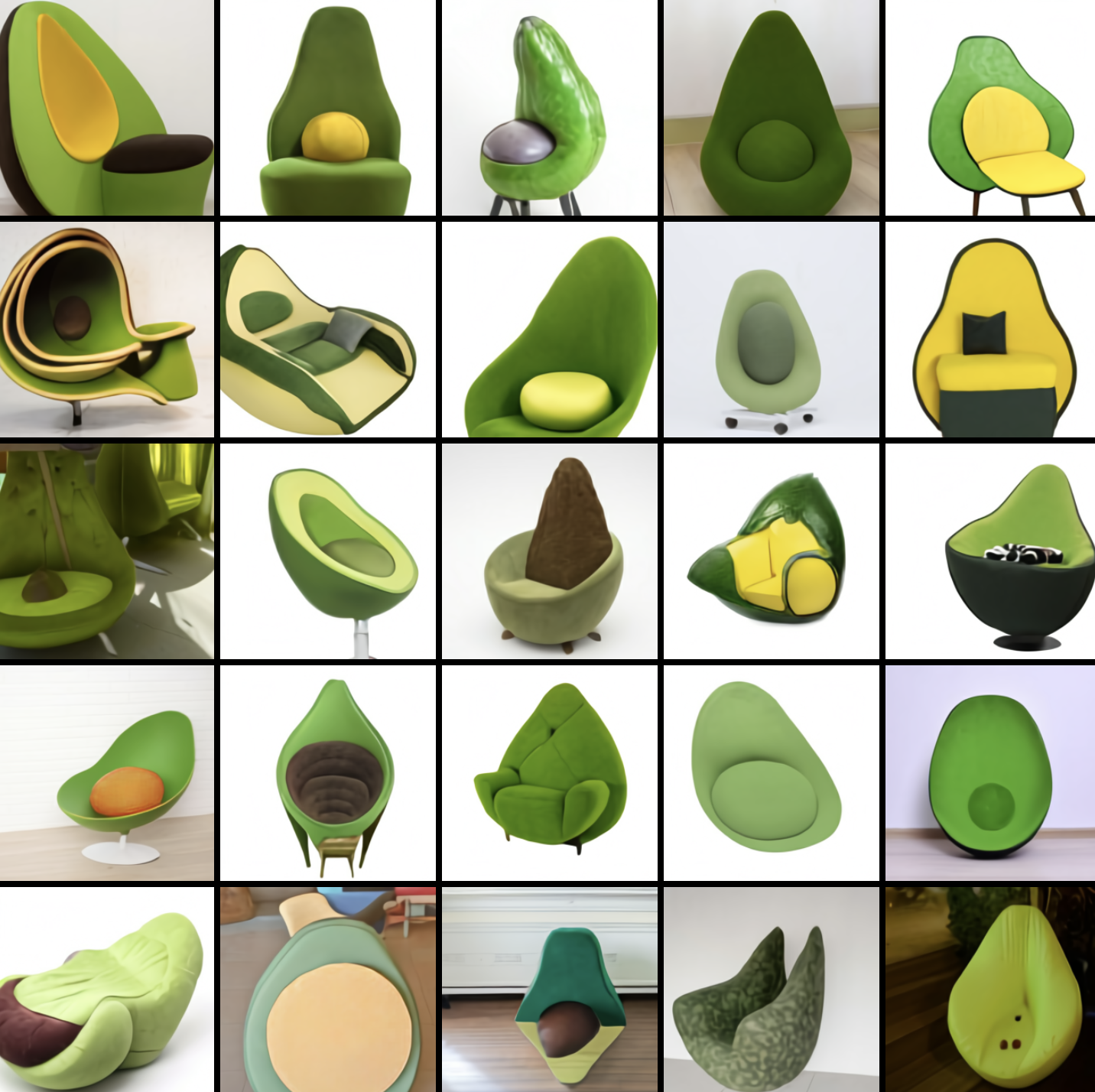

The first generation of DALL-E was announced by OpenAI in January 2021. It’s hard to remember those early days now. There was a lot of excitement: depending on your prompt, you could get anything between “blobs vaguely reminiscent of the thing you want” and “a picture that is mildly grotesque but recognisably what you asked for”. It was only just good enough to cherry-pick a handful of good-looking examples for OpenAI’s blog post - definitely a party trick. If you wind time forward to the point where AI image generation really reached human tier, you’re probably looking at Midjourney v5 in March 2023. The “pope drip” image of Pope Francis in a puffer coat was good enough that it went viral and fooled many people. So AI image generation went from party trick to “lots of people can’t tell it’s fake” in about two years.

The other great example to look at right now is video generation. Last week, everybody was talking about SeeDance 2, which gives you 20 seconds of coherent, multi-shot, cinematic video from a simple prompt; you can generate realistic adverts just from an Amazon product page. Wind back time by about two years to February 2024 and you reach the first Sora demos, which were roughly where DALL-E 1 had been for images: a bit of a party trick, not particularly good, but with the hint of something there. It took about two years to get from that to really good.

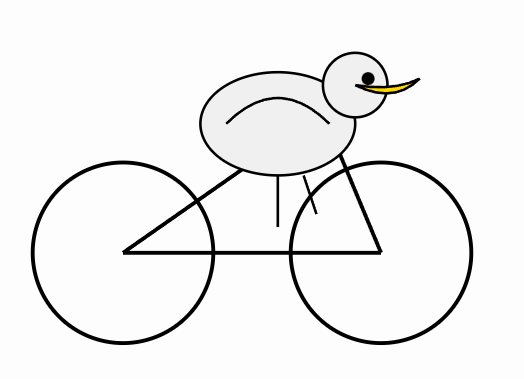

How about a third, very silly benchmark: asking models to draw an SVG of a pelican riding a bicycle. Claude 3.5 Sonnet (June 2024) could produce something just about recognisable as a pelican, but very, very janky. Right now, in February 2026, Gemini 3 Deep Think can draw a really convincing pelican, plausibly riding a bicycle. A little under two years, and a similar pattern of progress.

My understanding is that this is a fairly common trend across AI domains (image classification, protein folding, game-playing, translation, speech recognition), with most narrow benchmarks going from “party trick” to near-saturated in a couple of years.

So what happened to coding?

This raises the question: what happened to coding? OpenAI Codex was launched in 2021, five years ago now, so why isn’t software solved? I think the key thing to understand is that software is a collection of very different tasks with very different scopes. The two-year timeline only holds when you look at specific, narrow tasks: making an image from a prompt, making a short clip from a prompt, making an SVG of a pelican. The narrower the benchmark, the shorter the timeline.

When you look at a specific, narrow task within software, you do see the two-year pattern holding. Take solving a medium LeetCode problem: the first version of Codex in 2021 had about a 30% pass rate, which I would put in party-trick territory. By early 2024, about two and a half years later, LLMs were scoring 80% on Medium problems, which I think is reasonably on par with humans.

Where are we now?

When we look at software engineering as a whole, we need to be specific about which task we are evaluating. For any task where we are at the party-trick stage today (for example, the Opus-written C compiler that I blogged about previously), we would expect human-level performance within a couple of years and better-than-human performance in three years or so. For tasks where we aren’t even in party-trick territory yet, it’s probably going to take a bit longer.

My thinking is that there are still narrow tasks in software engineering that the current generation of agents can’t do at all: for example, exercising top-level quality judgement without human prompting, or noticing surprising things during exploratory testing and acting on them. In my experience, when it comes to matters of judgement in complex codebases, we are “pre-party-trick”. So for now, there is still a lot of work for human coders and testers to do in filling that judgement gap, at least for a couple more years. I wouldn’t write off all of our careers yet.

We probably do want to be making plans for what we’re all going to do in five years’ time, but five years is an eternity in AI capabilities. Roughly every five years there’s some big novel technique (transformers, for example) that takes AI capabilities into new realms, so it’s pretty hard to reason that far ahead. Also, five years is more than two full two-year cycles, so many things that AI can’t even begin to do now will be easy by then.

The threshing machine moment

So what does this mean for us in the software industry right now?

In his recent essay “The Adolescence of Technology”, Dario Amodei talked about a farming analogy for how technology changes human productivity.

Initially, farmers had ploughs, which augmented them only a little. Then, once tools could autonomously do a sub-task of farming, individual farmers became 10x or 20x more powerful with their threshing machines, because they could focus on just the high-leverage part of the job. Eventually combine harvesters came along, we had to rethink how farming was done, and the farming workforce shrank by 90% as traditional methods became obsolete.

I think we’re at the threshing machine stage of software engineering. It’s unarguable that AI is now beginning to automate many specific sub-tasks, and within two years it will be able to do large and substantial chunks of the job, but probably still not quite all of it. We have a window right now where we, as experienced software developers and quality practitioners, are all extremely high-leverage.

When the combine harvesters of software engineering come along, we will all need to find something else to do. But until then, the moment we’re in is real: powered by AI, you can have more impact now than you have ever been able to.

Originally published as an article on X. Follow Yanqing for more on AI coding and software quality.