Cheerful Mediocrity

Being part of a large dev team is a lot of fun. Mornings bring a new build - a chance to see the new features others have been working on. And a moment of truth - did your changes build? Do they work?

But it’s not always plain sailing - a big team moving fast means things break. Some days it feels like two steps forward, one back. Excitement. And frustration.

Using Claude Code, Codex & Gemini reminds me of those days. Except it’s the agentic harnesses which are bringing back the memories. The pace of change is frenetic - the tools update at least daily. And the quality is, well, variable.

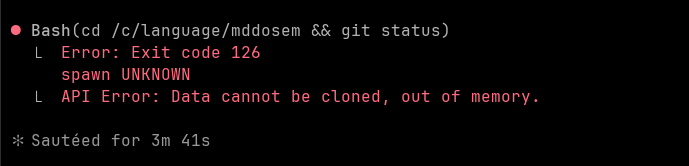

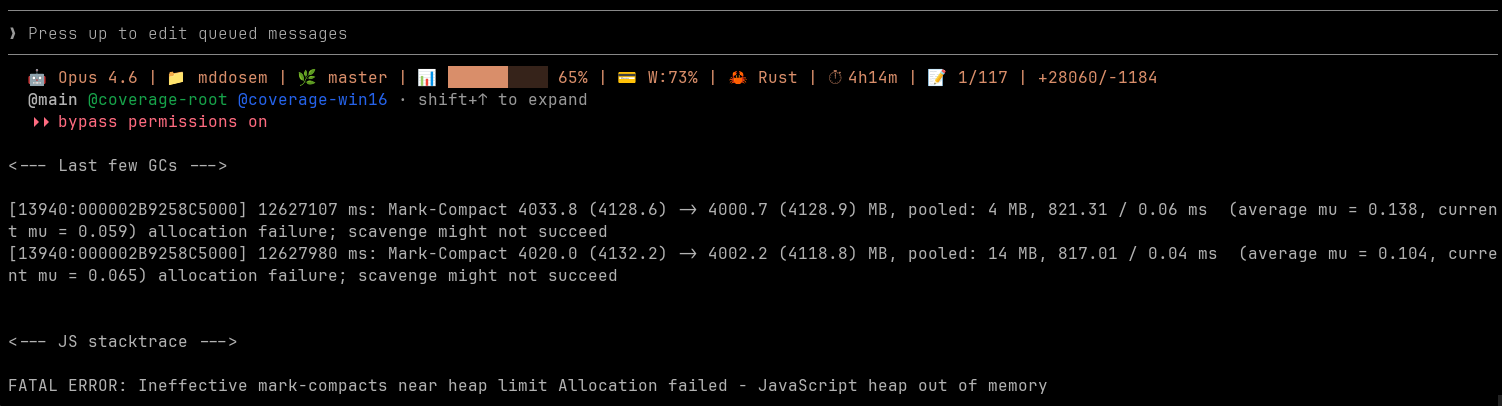

Memory leaks and crashes abound.

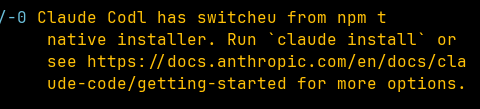

There are weird display bugs - what is Claude Codl?

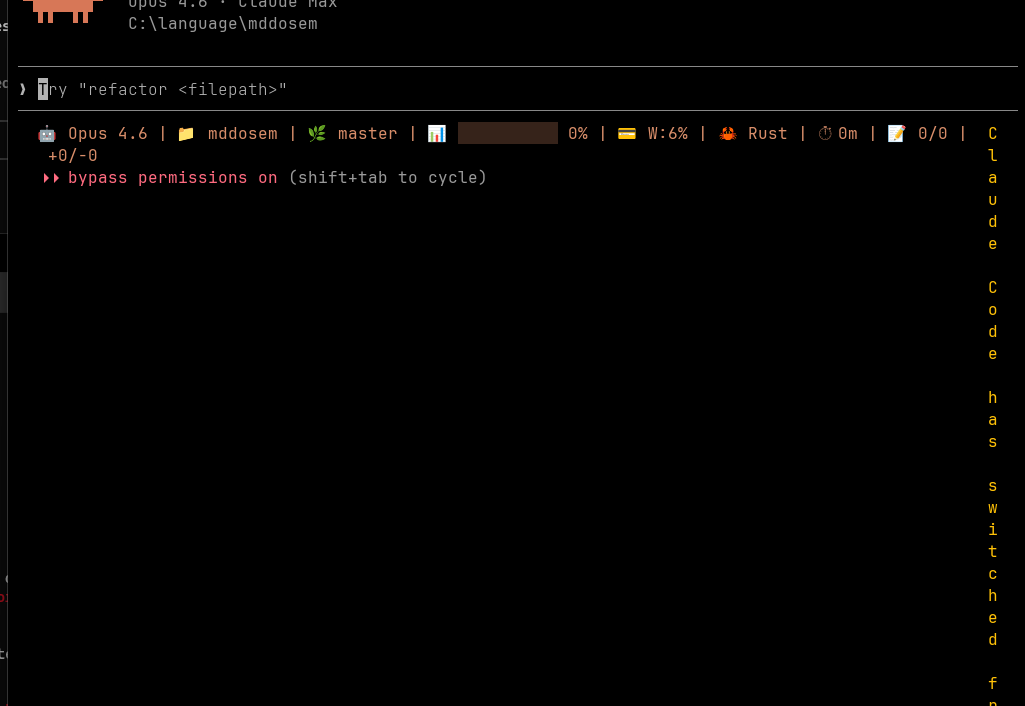

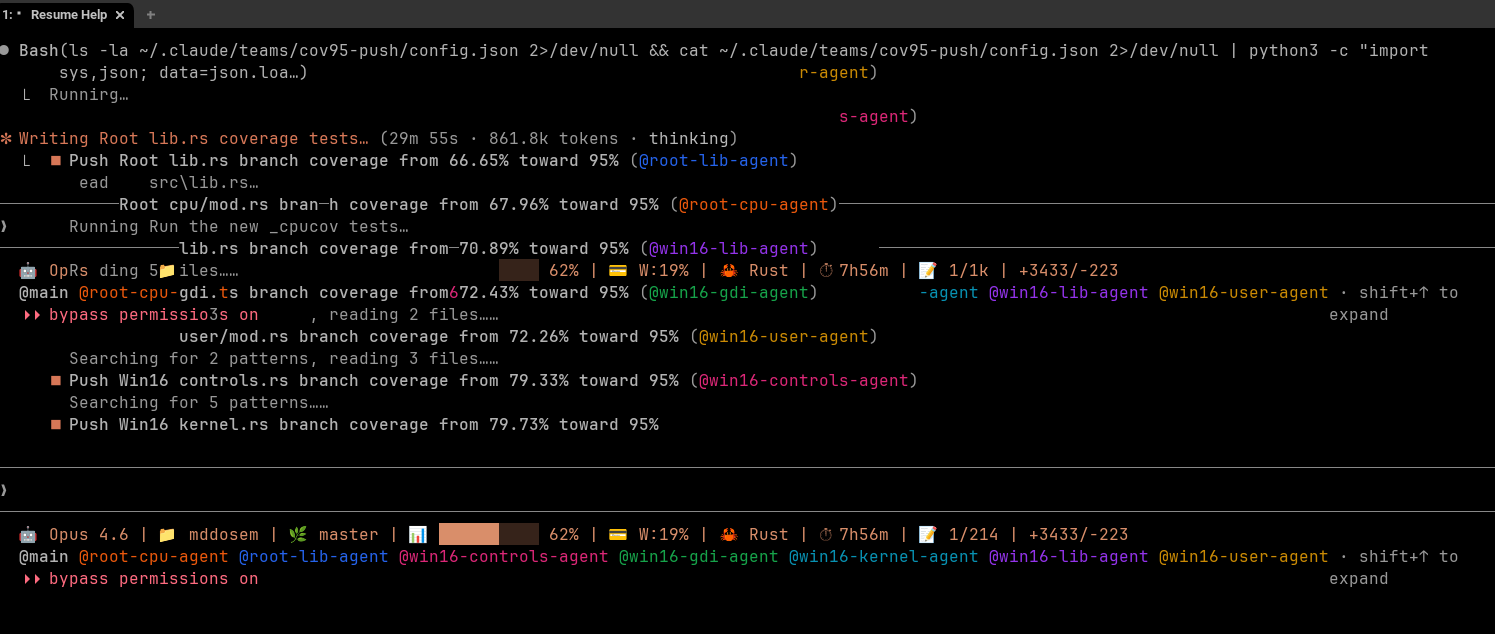

This output is colourful. But confusing.

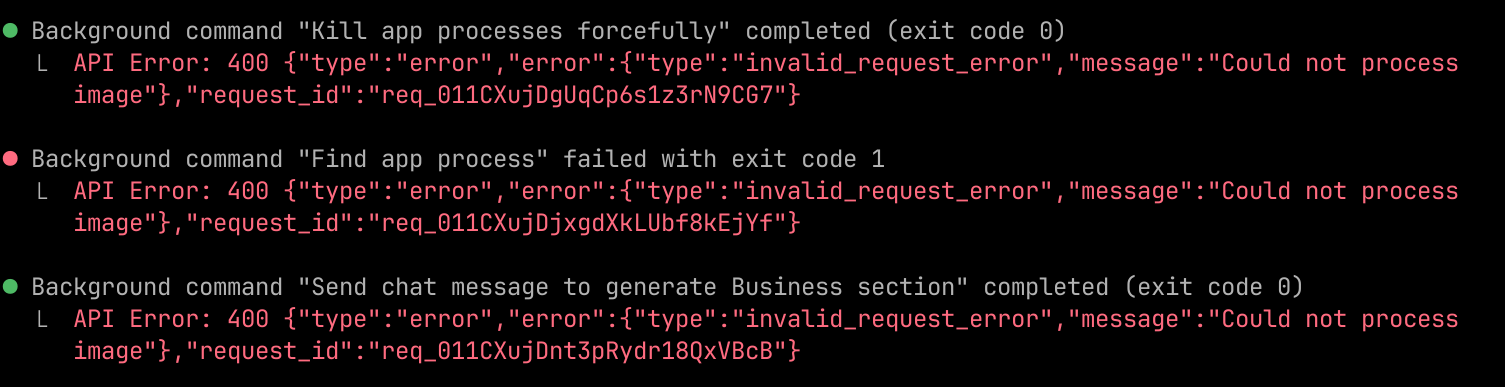

Claude will sometimes load files that break the context.

In one case the culprit turned out to be a bad image file that tripped up the API repeatedly.

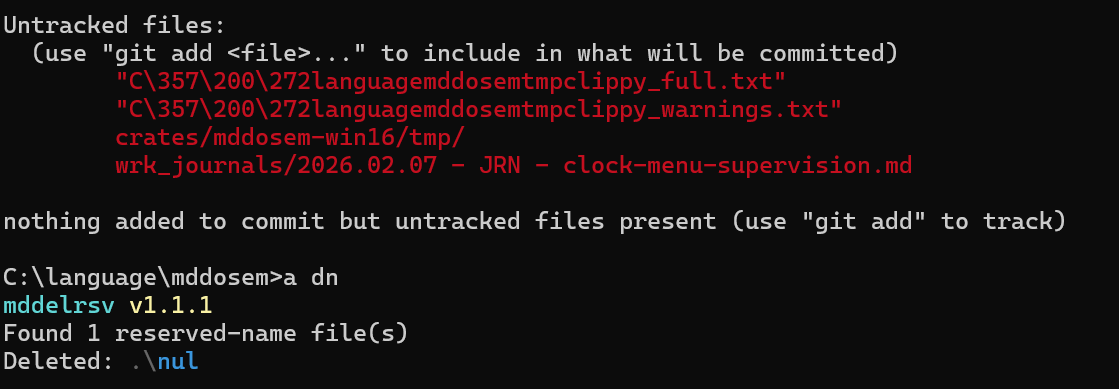

Claude also likes dropping “nul” files on Windows (which Windows is unable to clean up - so I got Claude to build a tool to remove them). And then there’s the accidental misnaming of files. “C\357\200\272” anyone?

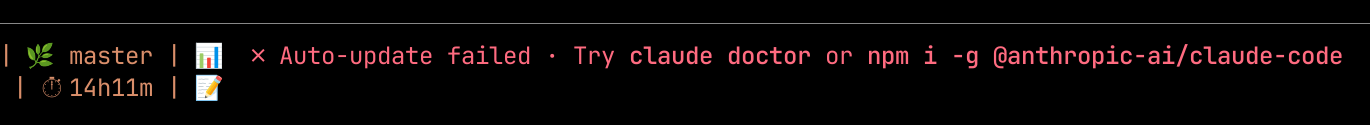

Auto-update often fails. And sometimes nukes the Claude launcher as well.

But it’s all part of the fun of playing with the latest toys. It’s not called the bleeding edge for nothing.

The problem

There’s a problem though. AI is meant to make development better. So why is Claude Code so, well, buggy? It’s not a great advert for AI, is it?

There are others. Take FastRender, Cursor’s AI browser. It all sounded so impressive - a swarm of agents creating 2M LOC and an apparently working browser. Except that the published code didn’t compile. And the architecture was, well, not great. It so infuriated one person that they repeated the experiment - fewer agents, more direction. This time the result was 20k LOC and a working browser.

So AI can do great things. And it can do bad things.

In some ways this is an age-old problem. Developers like to, well, develop. But software engineering is not about development. It’s about problem solving - creating solutions to real-world problems. Quality matters. And understanding what quality is - and how to achieve it - is hard.

Left to its own devices Claude is good at the development part. The quality part? Less so.

It’s not enough to say “high quality”. What does that mean? Some things are mutually exclusive. You might decide quality means having highly detailed, in-depth diagnostics. And that it means having high performance with minimal storage. But you can’t have both - you need to decide where you make trade-offs. As my good friend Edmund Pringle is wont to say - quality is value to someone who matters.

Nor is quality an afterthought. It starts right at the beginning of the project. The language you choose, the frameworks, the architecture, the codebase structure. They all affect the long-term.

Take Claude Code. It often prefers to write in Python. Python is lovely, but it was designed as an accessible language for humans. It trades correctness for ease of coding. It’s very easy to shoot yourself in the foot. But you can do it quickly.

I’m opinionated - to me all new AI-generated code should be in Rust. Safe Rust removes whole classes of bugs. Use-after-free, memory scribblers, data races. Things I’ve spent hours debugging - no longer an issue. (It doesn’t rule out everything: leaks and deadlocks can still happen.) Rust is hard - really hard - for humans. It’s no surprise Rust devs earn ~25% more than average SWEs. But it’s easy for AI.
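To make the concurrency point concrete, here is a minimal sketch rather than anything from a real project. The function name `parallel_count` and its parameters are made up for illustration. Several threads bump one shared counter, and the only way to get that past the compiler is to say how the sharing is synchronised.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Spawn `threads` workers that each add 1 to a shared counter `per_thread` times.
/// (Hypothetical helper, for illustration only.)
fn parallel_count(threads: usize, per_thread: u64) -> u64 {
    // Arc gives shared ownership across threads; Mutex guards every access.
    let counter = Arc::new(Mutex::new(0u64));

    let handles: Vec<_> = (0..threads)
        .map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..per_thread {
                    // The lock is taken and released on each increment.
                    *counter.lock().unwrap() += 1;
                }
            })
        })
        .collect();

    // Wait for every worker before reading the result.
    for handle in handles {
        handle.join().unwrap();
    }

    let total = *counter.lock().unwrap();
    total
}

fn main() {
    println!("total = {}", parallel_count(8, 1_000));
}
```

Swap the `Arc<Mutex<u64>>` for a plain `let mut counter = 0u64` captured by each spawned closure and the program is rejected at compile time. The bug never gets as far as a debugger.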

Design matters - a clear idea of the architecture, the component breakdown, the main flows. Yet Claude will happily jump into coding without a design. Sometimes it’ll produce a plan first, but the plans can be thin. You need to direct the model to spend more tokens - more thinking time - on the design and get it solid before letting it write code.

And then there’s testing. AI makes it cheap to have massive test suites. Historically most projects have, at best, a 1:1 ratio between test and production code. But AI will happily write tests all day and all night. You can parallelize test generation. You can run fuzz testing and property testing. You can target 100% line, function, region, branch, even MC/DC coverage. None of that happens by default - if you’re lucky Claude might write a few test cases. You can - should - push for more.
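For anyone who hasn’t met property testing, here is a rough sketch using only the standard library. Everything in it is hypothetical: the run-length codec (`rle_encode`, `rle_decode`), the tiny `XorShift64` generator and the `check_properties` driver exist only for this example. Instead of hand-picking cases, you state what must always hold (decoding an encoding gives back the input, no run is empty) and throw generated inputs at it.

```rust
// Property-testing sketch using only the standard library.
// `rle_encode` and `rle_decode` are hypothetical functions under test.

/// Run-length encode bytes as (count, value) pairs, count in 1..=255.
fn rle_encode(input: &[u8]) -> Vec<(u8, u8)> {
    let mut out: Vec<(u8, u8)> = Vec::new();
    for &byte in input {
        if let Some(last) = out.last_mut() {
            // Extend the current run unless its counter is already full.
            if last.1 == byte && last.0 < u8::MAX {
                last.0 += 1;
                continue;
            }
        }
        out.push((1, byte));
    }
    out
}

/// Expand (count, value) pairs back into the original bytes.
fn rle_decode(runs: &[(u8, u8)]) -> Vec<u8> {
    let mut out = Vec::new();
    for &(count, value) in runs {
        out.extend(std::iter::repeat(value).take(count as usize));
    }
    out
}

/// Tiny xorshift64 generator so the sketch needs no external crates.
/// The seed must be non-zero.
struct XorShift64(u64);

impl XorShift64 {
    fn next_u64(&mut self) -> u64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x
    }
}

/// Build an input from a few random runs so long repeats actually occur.
fn random_input(rng: &mut XorShift64) -> Vec<u8> {
    let mut input = Vec::new();
    for _ in 0..(rng.next_u64() % 8) {
        let value = (rng.next_u64() % 4) as u8;
        let len = (rng.next_u64() % 400) as usize;
        input.extend(std::iter::repeat(value).take(len));
    }
    input
}

/// Check the properties on `cases` random inputs; return a failing input if any.
fn check_properties(seed: u64, cases: u32) -> Result<(), Vec<u8>> {
    let mut rng = XorShift64(seed);
    for _ in 0..cases {
        let input = random_input(&mut rng);
        let encoded = rle_encode(&input);
        let round_trips = rle_decode(&encoded) == input;
        let no_empty_runs = encoded.iter().all(|&(count, _)| count >= 1);
        if !(round_trips && no_empty_runs) {
            return Err(input);
        }
    }
    Ok(())
}

fn main() {
    match check_properties(42, 1_000) {
        Ok(()) => println!("all properties held"),
        Err(input) => println!("property failed on an input of {} bytes", input.len()),
    }
}
```

A real project would more likely reach for a crate such as proptest, which also shrinks failing inputs down to something readable. The point is only that the generator writes the cases, not you.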

Take the 386 emulator I’ve been building. It has 32,000 unit tests. It has multiple external conformance oracles: 80386 single-step traces, Wine’s Win16 test binaries, Michael Abrash’s VGA demos, suites of DOS apps, Windows 3.1 apps. A coverage-guided fuzzing infrastructure with 29 fuzz targets employs four differential testing strategies: the JIT compiler against the interpreter, the interpreter against QEMU/Unicorn, Win16 emulation against Wine, and DOS emulation against DOSBox-X. An adaptive budget fuzzer learns which targets find bugs most effectively and allocates compute time accordingly. Line and branch coverage sits at 96-100% depending on the module.
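The differential idea is simple enough to sketch in a few lines. To be clear, this is not the emulator’s code. The names `add8_reference`, `add8_fast`, `AddResult` and `differential_fuzz` are invented for the example, and an 8-bit add-with-carry stands in for a whole CPU. One implementation is slow and obviously right, the other is clever, and the harness hunts for any input where they disagree.

```rust
// Differential testing sketch: a slow, obviously-correct reference versus a
// "fast" implementation, compared on many pseudo-random inputs.
// All names here are hypothetical; this is not the emulator's real code.

/// Result of an 8-bit add-with-carry: value plus carry and signed-overflow flags.
#[derive(Debug, PartialEq, Eq, Clone, Copy)]
struct AddResult {
    value: u8,
    carry: bool,
    overflow: bool,
}

/// Reference model: widen to larger integer types and check ranges directly.
fn add8_reference(a: u8, b: u8, carry_in: bool) -> AddResult {
    let c = carry_in as u16;
    let wide = a as u16 + b as u16 + c;
    let signed = (a as i8 as i16) + (b as i8 as i16) + (c as i16);
    AddResult {
        value: wide as u8,
        carry: wide > 0xFF,
        overflow: signed < -128 || signed > 127,
    }
}

/// "Optimised" model: bit tricks, the kind of thing a fast path might use.
fn add8_fast(a: u8, b: u8, carry_in: bool) -> AddResult {
    let (partial, c1) = a.overflowing_add(b);
    let (value, c2) = partial.overflowing_add(carry_in as u8);
    // Signed overflow: both operands differ in sign from the result.
    let overflow = ((a ^ value) & (b ^ value) & 0x80) != 0;
    AddResult { value, carry: c1 || c2, overflow }
}

/// Tiny xorshift64 generator so the sketch needs no external crates.
/// The seed must be non-zero.
struct XorShift64(u64);

impl XorShift64 {
    fn next_u64(&mut self) -> u64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x
    }
}

/// Run `iterations` random comparisons; return the first disagreeing input.
fn differential_fuzz(seed: u64, iterations: u32) -> Option<(u8, u8, bool)> {
    let mut rng = XorShift64(seed);
    for _ in 0..iterations {
        let r = rng.next_u64();
        let (a, b, carry_in) = (r as u8, (r >> 8) as u8, ((r >> 16) & 1) == 1);
        if add8_reference(a, b, carry_in) != add8_fast(a, b, carry_in) {
            return Some((a, b, carry_in));
        }
    }
    None
}

fn main() {
    match differential_fuzz(0x9E37_79B9_7F4A_7C15, 100_000) {
        None => println!("no divergence found"),
        Some(input) => println!("implementations diverge on {:?}", input),
    }
}
```

Neither side needs a hand-written expected value. The reference is the oracle, which is what makes this cheap to scale. Swap in a real interpreter and a real JIT and the shape stays the same.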

None of this happened by accident. Every one of those decisions - Rust, differential testing, coverage targets - was a human call. The AI did the heavy lifting. I did the thinking.

And so?

These days it seems like everyone is obsessed with making AI code faster. We’ve got agent teams, swarm coding, endless frameworks. But the bottleneck isn’t generation - it’s knowing what quality means for your project. And having the bloody-mindedness to enforce it. Those big dev teams? They had something AI teams don’t - shared standards, a collective pride. The embarrassment when someone called you out on sloppy code. Your AI agents won’t feel that. They’ll cheerfully produce whatever you tolerate. The tools are getting better every day. The humans using them… are they?

Originally published on Martin Davidson’s Substack. Follow Martin for more on AI and software engineering.